Using Generative AI for Research

Introduction

Generative artificial intelligence (gen AI) can process and summarize large amounts of information quickly. This makes it a powerful tool for public sector research. Time-consuming tasks, such as finding relevant studies, reviewing long reports, organizing sources, cleaning data, or drafting summaries, can now be completed much faster with the support of AI tools.

As a result, researchers can spend less time searching for information and more time interpreting evidence, forming insights and advising decision-makers. However, speed alone does not equal quality. Not all AI tools work the same way, and not all AI-generated information can be trusted without verification.

Public servants must use generative AI responsibly. Government research must meet high standards of accuracy, transparency and integrity.

This article explores how generative AI can support research work, how to evaluate AI-generated information, and how to guide its responsible use in research.

How Gen AI Fits in the Research Lifecycle

Gen AI works best as a research assistant, not a researcher or decision-maker. It can support many routine or time-intensive tasks, but it cannot replace human judgment, subject matter expertise, accountability, or contextual understanding.

At its best, generative AI helps researchers:

- find and scan relevant sources

- summarize long articles and reports

- organize and cluster information

- identify themes, patterns, or gaps

- support basic data analysis and visualization

- draft outlines and improve clarity of writing

At its limits, gen AI struggles with:

- understanding political, social, or cultural contexts

- making value-based or policy judgments

- assessing public interests or risks

- knowing what matters most to intended populations

- taking responsibility for errors or harm

- evaluating and prioritizing the quality of sources

Practical Uses of Generative AI in Public Sector Research

Generative AI can support multiple stages of the research process, from identifying sources to communicating findings. These tools can help researchers work more efficiently but do not replace professional judgment or verification.

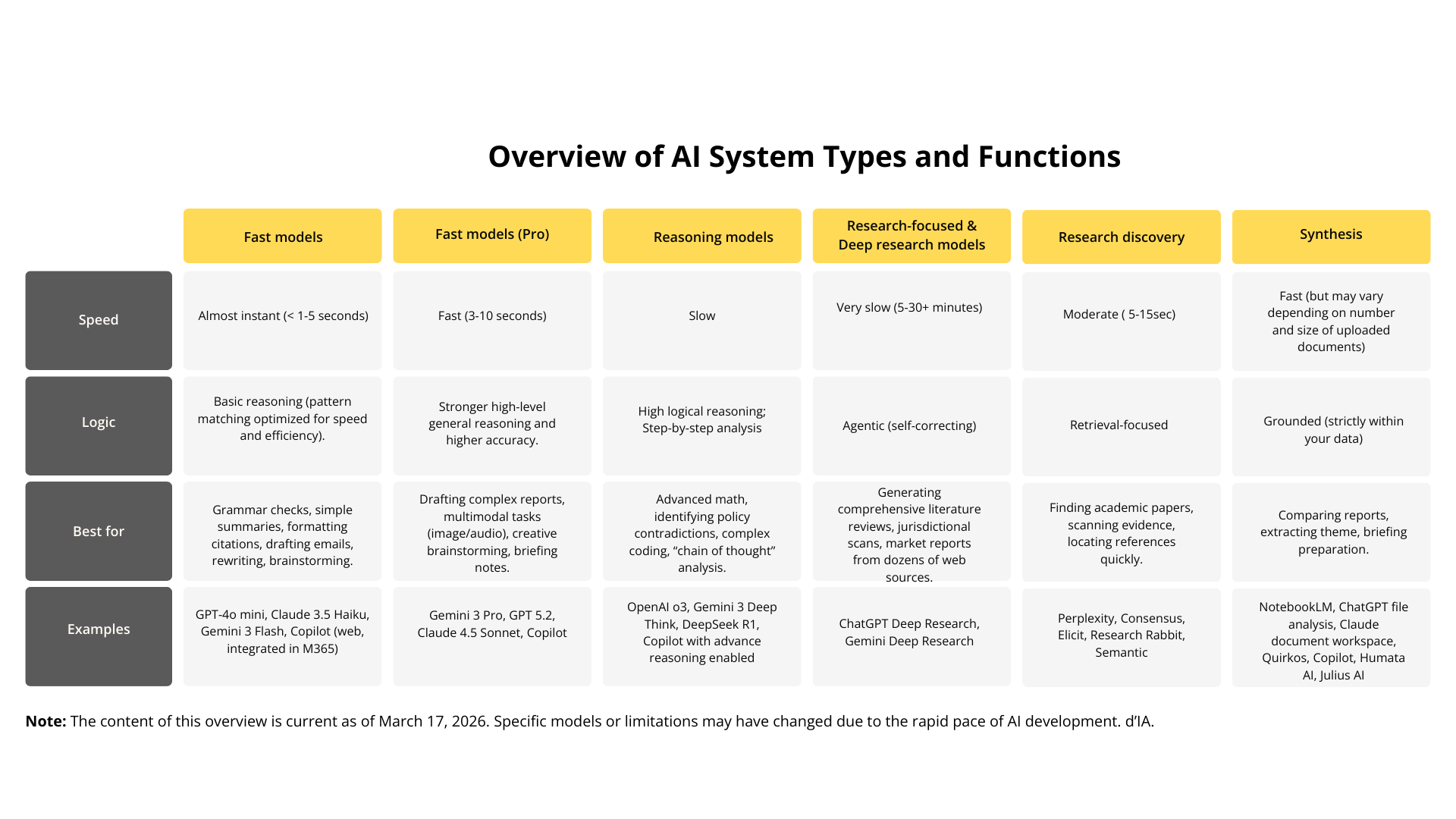

Descriptive text

- Fast Models

  - Speed: Almost instant (< 1-5 seconds).

  - Logic: Basic reasoning; optimized for speed and efficiency.

  - Best for: Grammar checks, simple summaries, drafting emails and brainstorming.

  - Examples: GPT-4o mini, Claude 3.5 Haiku, Gemini 3 Flash, Copilot (web).

- Fast Models (Pro)

  - Speed: Fast (3-10 seconds).

  - Logic: Stronger high-level general reasoning and higher accuracy.

  - Best for: Drafting complex reports, multimodal tasks (image/audio) and briefing notes.

  - Examples: Gemini 3 Pro, GPT 5.2, Claude 4.5 Sonnet, Copilot.

- Reasoning Models

  - Speed: Slow.

  - Logic: High logical reasoning; uses step-by-step "chain of thought" analysis.

  - Best for: Advanced math, identifying policy contradictions and complex coding.

  - Examples: OpenAI o3, Gemini 3 Deep Think, DeepSeek R1.

- Research-focused & Deep Research Models

  - Speed: Very slow (5 to 30+ minutes).

  - Logic: Agentic and self-correcting.

  - Best for: Generating comprehensive literature reviews, jurisdictional scans and deep web research.

  - Examples: ChatGPT Deep Research, Gemini Deep Research.

- Fast Models (Retrieval-Focused)

  - Speed: Moderate (5-15 seconds).

  - Logic: Focused on finding and citing external information.

  - Best for: Finding academic papers, scanning evidence, and locating references quickly.

  - Examples: Perplexity, Consensus, Elicit, Research Rabbit.

- Fast Models (Grounded)

  - Speed: Fast (varies by the size of uploaded files).

  - Logic: Grounded strictly within the user's provided data.

  - Best for: Comparing internal reports, extracting themes and briefing preparation.

  - Examples: NotebookLM, ChatGPT file analysis, Claude document workspace, Quirkos, Humata AI.

- Fast models are best for checking grammar, helping with drafting, simple summarizing and editing.

- Fast models (pro versions) help with general reasoning, complex drafting and following detailed instructions.

- Reasoning models help analyze complex questions, solve math problems, handle deep coding tasks and compare evidence.

- Research-focused models and deep research models help locate and synthesize information across multiple sources.

- Research discovery tools help identify relevant academic and policy literature.

- Synthesis tools help organize and analyze collections of documents.

Researchers often use a combination of these models depending on the task.

1. Literature reviews and evidence scanning

AI tools can quickly identify relevant studies, summarize key findings and group related research. This can significantly reduce the time needed to scan large amounts of literature.

How:

- Define your research question, scope, timeframe and keywords.

- Ask the AI system to identify relevant studies and summarize key findings.

- Request grouping of sources by theme, policy implications, or gaps.

- Use discovery tools to locate original sources and verify citations.

Prompt example

Using your deep research mode, identify the top 10 peer-reviewed studies from the last five years regarding universal basic income pilots in North America. Specifically, look for data on workforce participation rates. Provide a summary of findings for each study and group them by economic impact and social well-being. Include direct links to the original sources for verification.

How to use responsibly:

- Treat AI summaries as starting points.

- Always verify sources and citations.

- Read original material before drawing conclusions.

- Provide as much clear, relevant detail as possible in your prompt so the AI can draw from sources more strategically and effectively.
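
One way to make citation checking routine is to pull every DOI and link out of an AI-generated reading list so that each one can be looked up by hand. The sketch below is illustrative only: the function name `extract_references_to_verify` and the regular expressions are assumptions rather than features of any tool named above, and the DOI pattern is a simplified approximation. It does not confirm that a source exists or says what the AI claims; it only produces the checklist.

```python
import re

# Simplified patterns (assumptions for illustration); real DOIs and URLs vary more widely.
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[^\s\"<>]+")
URL_PATTERN = re.compile(r"https?://[^\s\"<>]+")


def extract_references_to_verify(ai_output: str) -> dict[str, list[str]]:
    """Collect the DOIs and URLs in AI-generated text so a person can check each one."""

    def clean(match: str) -> str:
        # Drop trailing punctuation that belongs to the sentence, not the identifier.
        return match.rstrip(".,;)")

    dois = sorted({clean(m) for m in DOI_PATTERN.findall(ai_output)})
    urls = sorted({clean(m) for m in URL_PATTERN.findall(ai_output)})
    return {"dois": dois, "urls": urls}


# Hypothetical AI output; the DOI and URL are placeholders, not real references.
sample = "Study A (doi: 10.1234/example.001). Study B: https://example.org/report-b, accessed online."
for kind, items in extract_references_to_verify(sample).items():
    for item in items:
        print(f"[ ] verify {kind[:-1]}: {item}")
```

Each item on the resulting checklist still needs to be opened and read: an identifier that resolves correctly can still point to a study that does not support the AI's summary.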

2. Making sense of complex material

Gen AI can help summarize complex documents, highlight recurring themes and map connections between ideas. This is useful for orientation, briefing preparation and early analysis.

How:

- Upload or paste reports, legislation, finalized policies, or technical documents.

- Ask for summaries tailored to your needs (for example, key findings, implications).

- Request comparisons across multiple documents.

- Use synthesis tools to organize and extract insights.

Prompt example

I've uploaded three separate municipal housing strategy reports. Using these as your only source of information, create a comparison table that highlights: 1) their definition of affordability, 2) proposed zoning changes, and 3) identified barriers to implementation. After the table, list any recurring themes that appear in all three documents.

How to use safely:

- Check summaries against original texts.

- Watch for missing nuance or oversimplification.

- Do not rely on AI to resolve conflicting evidence.

- Do not upload content that is protected, draft, or sensitive in any way.

3. Working with data

Some AI tools can assist with cleaning data, exploring patterns and creating simple visualizations. These capabilities can support exploratory analysis and hypothesis development.

How:

- Describe your dataset and research objective.

- Ask the AI to suggest ways to organize or analyze the data.

- Request summaries of trends or potential patterns.

Prompt example

I am reviewing data for 12 neighbourhoods over the last two years. Using the attached file, please point out any seasonal patterns or unusual dips in the numbers. Based on the data, suggest three potential reasons for these drops that I should look into, and include the analysis code you used to find them.

How to use safely:

- Validate findings using established tools and methods.

- Avoid using AI outputs as final analytical evidence.

- Ensure sensitive data is protected.
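
When an AI tool reports an "unusual dip", the claim can be checked independently with a basic, well-understood method. The sketch below flags values that fall well below the series average using a z-score. The monthly figures, the function name `find_unusual_dips` and the threshold of 1.5 standard deviations are all hypothetical choices for illustration; a real analysis would rely on your organization's established statistical tools and would account for seasonality.

```python
from statistics import mean, stdev


def find_unusual_dips(values: list[float], threshold: float = 1.5) -> list[int]:
    """Return the positions of values more than `threshold` standard deviations below the mean."""
    if len(values) < 2:
        return []  # a standard deviation needs at least two observations
    average = mean(values)
    spread = stdev(values)
    if spread == 0:
        return []  # all values are identical, so nothing stands out
    return [i for i, v in enumerate(values) if (v - average) / spread < -threshold]


# Hypothetical monthly counts for one neighbourhood (not real data).
monthly_counts = [120, 118, 125, 122, 60, 119, 121, 124, 117, 123, 120, 122]
print(find_unusual_dips(monthly_counts))  # prints [4], the fifth month
```

If the positions flagged here do not match the dips the AI described, that discrepancy is a signal to review the AI's analysis code and the underlying data before going further.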

4. Writing and communication support

AI can help improve clarity, grammar and structure. It can also assist with drafting outlines, rephrasing content in plain language and formatting references.

How:

- Provide draft text, notes, or outlines.

- Ask the AI to improve clarity or create plain-language summaries.

- Request alternative versions for different audiences.

- Use fast models for editing and formatting and reasoning models for refining complex arguments.

Prompt example

I have written a technical briefing on zero-emission transit planning (pasted below). First, use your reasoning capabilities to check if the transition between my third and fourth arguments is logical. Second, rewrite the executive summary into plain language suitable for a general audience at a grade 8 reading level, avoiding all technical jargon while keeping the core recommendations intact.

How to use safely:

- Review all content for accuracy and tone.

- Confirm references and quotations.

- Maintain authorship and accountability.
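
Confirming quotations can be partly mechanized: any passage an AI tool presents in quotation marks should appear word for word in the source you supplied. The sketch below is a minimal illustration under that assumption. The function name `find_unverified_quotes` and the sample strings are hypothetical, it only handles straight double quotes, and a match still needs a human check that the quote is used in context.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so line breaks do not cause false mismatches."""
    return " ".join(text.lower().split())


def find_unverified_quotes(ai_text: str, source_text: str) -> list[str]:
    """Return quoted passages in `ai_text` that do not appear verbatim in `source_text`."""
    quotes = re.findall(r'"([^"]+)"', ai_text)
    source = normalize(source_text)
    return [q for q in quotes if normalize(q) not in source]


# Hypothetical example texts.
source = "The pilot will expand to three additional routes by 2027."
draft = 'The report says the pilot "will expand to three additional routes" and "is fully funded".'
print(find_unverified_quotes(draft, source))  # prints ['is fully funded']
```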

Evaluating Information Generated by AI

Gen AI does not "know" facts. It generates responses based on patterns in data. This means it can:

- sound confident while being wrong

- invent sources, quotes, or details

- reflect biases in its training data

For this reason, AI-generated content should always be treated as unverified information. The same critical thinking skills used to evaluate traditional sources apply to AI outputs.

Frameworks such as SIFT and RADAR can help you assess whether the information you use in your research is reliable. You can also consult the course Untangling Misinformation and Disinformation for additional strategies on identifying false, misleading, or manipulated content.

SIFT method

The SIFT method was created by Mike Caulfield at the University of Washington. It is a four-step approach to evaluating information that helps determine whether online content can be trusted as a credible and reliable source.

- Stop: Before you read or share an article or video, pause and think critically about the information.

- What do you know about the source of the information and its reputation?

If you don't have that information, use the other steps to get a sense of what you're looking at. Don't accept the information until you know what it is.

- Investigate the source: Who created the information and why? Take a moment to look up the author(s) and source(s) before accepting or sharing the information.

- What can you find about the author(s) or website creator(s)?

- What is their agenda?

- Do they have financial ties, political leanings or personal biases that could skew their assessment?

- Are they a reputable expert in the field?

Taking a few minutes to figure out where the information is from before reading will help you decide if it is worth your time, and if it is, help you to better understand its significance and trustworthiness.

- Find better coverage: Check other credible sources to see whether there is agreement.

- Are there other sources that confirm this information or challenge it?

- Can you track the original source?

- If you are dealing with an image, do a reverse image search to reveal whether the image has been altered or copied from elsewhere on the internet. You can do this by copying the image or the image's URL into the search bar of an image search tool like TinEye or Google Lens.

Once a common understanding is found, do you need to agree with it? Absolutely not! However, understanding the context and history of the information will help you better evaluate it and form a starting point for future investigation.

- Trace claims, quotes and media to the original context: Review the original context. If no source is provided, investigate further, as this may be a warning sign.

- Where did the information originate?

- Does the original source support what is being said?

- Is the claim, quote, or media shown accurately?

- Has the information been selectively used to support a particular viewpoint?

- Has anything been taken out of context?

Online information can lose meaning when shared without context. Tracing claims, quotes, and media back to their original sources helps confirm whether they are accurate, fairly presented, and not taken out of context.

RADAR method

The RADAR method was introduced by Jane Mandalios from the American College of Greece. It is a five-step approach to help evaluate information. The following segment has been adapted from Mandalios' paper, published in the Journal of Information Science in 2013.

- Relevance: Information must be relevant to be useful for your research. If it does not clearly support your topic or research question, it may not be worth using.

- Does this information answer my research question?

- Is it clearly related to my topic?

- Who is the intended audience?

- Authority: Evaluating who created the information is critical to assessing its credibility. This includes the author, publisher and any organization responsible for the content.

- Who created this information, and who do they cite?

- Does the author have relevant expertise or experience?

- Is the author affiliated with a credible institution or organization?

- Is this author cited by others?

- Is contact information available if clarification is needed?

- Date: The timeliness of the information matters, but newer is not always better. The importance of the publication date depends on your topic and field.

- When was the information published or last updated?

- Do I need the most current information for this topic?

- Is the information outdated or still accurate and relevant?

- Can it be used to provide historical context?

- Appearance: The way the information is presented can signal its reliability.

- Is the information presented in a professional format?

- Does it follow conventions such as abstracts, citations, or references?

- Is it published in a peer-reviewed journal or reputable source?

- Are sources cited and do they appear credible?

- Are advertisements present, and do they affect credibility?

- Reason for writing: Identifying why the information was created helps identify potential bias or limitations.

- Why was this information published?

- Is it meant to inform, educate, persuade, entertain, or sell something?

- Is the purpose clearly stated?

- Is the information objective and balanced?

- Are methods, data, or evidence clearly explained when relevant?

RADAR is not a simple pass or fail test or a final decision-making tool. It is meant to help you compare and assess the overall quality of information as you search and evaluate sources.

Sources that are biased, opinion-based, or even inaccurate can still be useful in research when they are used intentionally, for example to illustrate opposing viewpoints or common misconceptions. When using these sources, it is important to clearly identify their limitations and place them alongside more balanced and credible evidence.

Considerations for Generative AI in Research

While generative AI is increasingly being used in research, it also raises important concerns. Understanding these considerations helps researchers approach the use of these tools more carefully and responsibly, especially in public sector contexts where trust, transparency, and accountability are essential.

Use of content without consent

Many generative AI models are trained on large collections of text, images and data gathered from across the internet. In some cases, this training data may include copyrighted, proprietary, or personal content used without explicit consent.

Why does this matter for research?

- It raises questions about intellectual property and fairness.

- It may conflict with government values around ethical sourcing.

- It can create uncertainty about whether outputs can be reused or published.

Example: An AI tool generates a well-written paragraph that closely mirrors a paywalled academic article. If reused without verification, this could unintentionally violate copyright or attribution rules.

Guidance

- Treat AI outputs as inspiration, not as original sources.

- Avoid copying AI-generated text directly into published work.

- Always verify and cite original, authoritative sources.

- Use models that have more ethical practices for collecting training data.

Lack of transparency

Many AI systems operate as "black boxes". While they can produce fluent answers, they often cannot explain how or why a particular output was generated.

Why this matters for public sector research

- Government research must be defensible and explainable.

- Decision-makers may ask how conclusions were reached.

- Lack of transparency makes errors hard to detect.

Example: An AI tool suggests a trend in service usage but cannot explain which data points or assumptions led to that conclusion.

Guidance

- Use AI for exploration, not final evidence.

- Use grounded AI tools. A grounded tool connects its responses to trusted external sources (such as documents, databases, or cited references) instead of relying only on the model's training data. Many grounded tools use Retrieval-Augmented Generation (RAG), meaning they retrieve relevant information before generating an answer and typically show or cite the sources used.

- Signs that a tool is grounded include: providing citations or links, allowing you to trace answers back to source material, or connecting to your organizational data or approved datasets.

- Be cautious when AI outputs influence decisions or policy advice.

- Use tools and methods that allow for traceability and review.
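
To make the idea of grounding concrete, the sketch below imitates the retrieve-then-generate pattern in miniature. It is a toy, not a description of how any particular product works: the documents are invented, the hypothetical `retrieve` function ranks passages by simple word overlap (real systems typically use more sophisticated search), and `build_grounded_prompt` only assembles the text that would be sent to a model rather than calling one.

```python
def tokenize(text: str) -> set[str]:
    """Split text into a set of lowercase words, ignoring basic punctuation."""
    return {word.strip(".,;:?!()").lower() for word in text.split()} - {""}


def retrieve(question: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return the IDs of the documents sharing the most words with the question."""
    question_words = tokenize(question)
    scores = {doc_id: len(question_words & tokenize(text)) for doc_id, text in documents.items()}
    # Highest score first; ties are broken alphabetically by ID for repeatable results.
    ranked = sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))
    return [doc_id for doc_id in ranked[:top_k] if scores[doc_id] > 0]


def build_grounded_prompt(question: str, documents: dict[str, str]) -> str:
    """Assemble a prompt that restricts the model to the retrieved, labelled passages."""
    sources = retrieve(question, documents)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in sources)
    return (
        "Answer using only the sources below and cite the source ID for each claim.\n"
        f"{context}\n"
        f"Question: {question}"
    )


# Invented documents for illustration only.
documents = {
    "report-a": "Transit ridership fell during winter months.",
    "report-b": "Housing starts rose in the second quarter.",
    "report-c": "Winter service cuts reduced transit ridership further.",
}
print(build_grounded_prompt("What happened to transit ridership in winter?", documents))
```

Because every passage carries a source ID, a reviewer can trace each claim in the eventual answer back to a specific document, which is the traceability the guidance above asks for.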

AI-generated content polluting the information ecosystem

As AI-generated content becomes more common, it increasingly feeds back into the internet where future AI systems may train on it. This can create a cycle where errors, bias, or low-quality information are repeated and amplified.

Why this matters

- Information quality may decline over time.

- It becomes harder to distinguish original research from synthetic content.

- Weak or incorrect information may appear credible.

Example: An AI model summarizes several online articles that were themselves written by AI, reinforcing unverified claims as if they were established facts.

Guidance

- Prioritize primary sources and original research.

- Be cautious with information that lacks clear authorship.

- Actively seek diverse and credible perspectives.

Limited understanding of the real world

Generative AI does not have lived experience, situational awareness, or an understanding of social and political consequences. It cannot fully grasp context, nuance, or impact.

Why this matters

- Public sector research often affects real people and communities.

- Context matters as much as data.

- AI cannot assess ethical or societal trade-offs.

Example: An AI tool proposes a "cost-effective" policy option without recognizing its disproportionate impact on vulnerable populations.

Guidance

- Apply human judgment to interpret AI outputs.

- Consider social, cultural and policy context.

- Test AI-generated insights against real-world knowledge.

Deepfakes and synthetic evidence

Generative AI can create highly realistic text, images, audio and video. While this can support creative work, it also increases the risk of misinformation, fabricated evidence and loss of trust.

Why this matters

- Research relies on credible evidence.

- Synthetic content can be difficult to detect.

- Trust in institutions can be undermined.

Example: An AI-generated quote or dataset appears authentic but has no real-world source.

Guidance

- Verify the authenticity of all evidence.

- Avoid using AI-generated content as proof.

- Treat synthetic media with heightened scrutiny.

Principles for Using Generative AI: The FASTER Framework

To manage these risks, public sector researchers can rely on clear principles that guide responsible use. The GC's Guide on the use of generative AI provides the FASTER framework, which applies to research as well:

- Fair – avoid reinforcing bias or exclusion.

- Accountable – humans remain responsible for outcomes.

- Secure – protect sensitive and personal information.

- Transparent – be open about when and how AI is used.

- Educated – understand assumptions and limitations.

- Relevant – use AI only where it adds clear value.

If you cannot explain how AI supported your research or defend its role, you may be relying on it too heavily. AI use should be documented, reviewed, and justified, especially in policy or decision-support contexts. To find out more about other risks presented by generative AI tools, consult the article Understanding AI Safety in Government.

Generative AI and the Future of Public Sector Research

Generative AI will continue to reshape research practices. While tools will become more powerful, core research skills will remain essential. Researchers will need:

- strong critical thinking skills

- information evaluation skills

- ethical awareness

- clear communication skills

AI may increase capacity, but it cannot replace professional responsibility or public accountability.

Conclusion

Generative AI is neither a solution nor a threat on its own. It is a tool. Its impact depends on how it is used. Used wisely, it can reduce administrative burden, support deeper analysis, and improve clarity and accessibility in research work. When used without care or oversight, it can spread misinformation, weaken research quality and undermine trust in government.

For public sector research, the goal is to use AI thoughtfully in ways that support researchers, increase productivity and improve the quality of their work. Generative AI should support tasks such as searching, summarizing, and organizing information, while human judgment remains essential for interpretation, validation, and decision-making. Research outputs should always be AI-assisted, not AI-generated, with clear human guidance and accountability.

This is a rapidly evolving area. Tools, practices and expectations will continue to change. Public servants should regularly consult updated guidance, trusted resources and subject-matter expertise. Even when sources are provided, AI tools can misinterpret information or present it in misleading ways. Reviewing original sources and applying professional judgment must remain central to the research process.

By applying critical thinking, clear principles, and careful review, researchers can use generative AI in ways that support rigorous research while protecting the integrity of their work and the trust of the public they serve.

Resources